Digital Twin 101

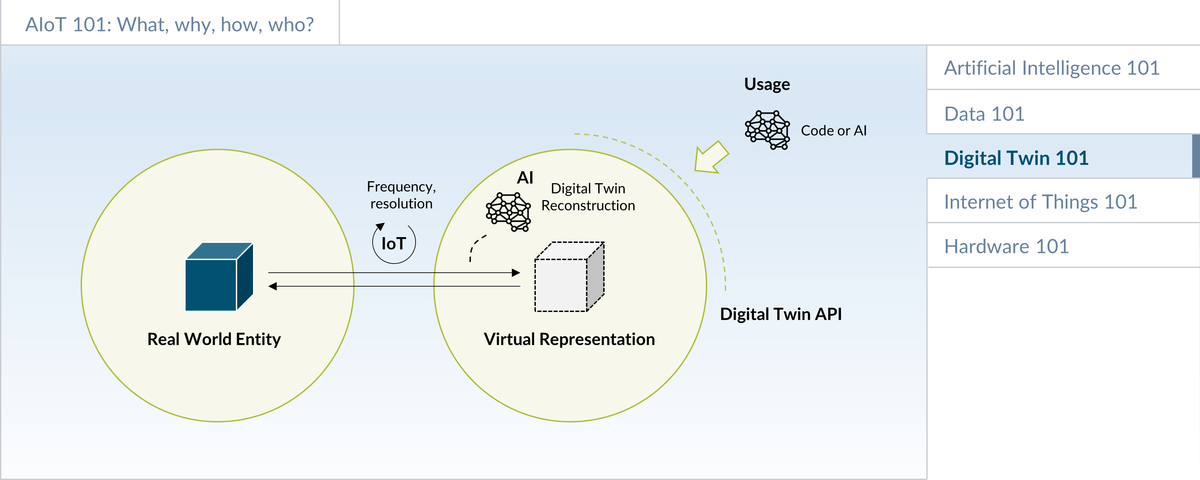

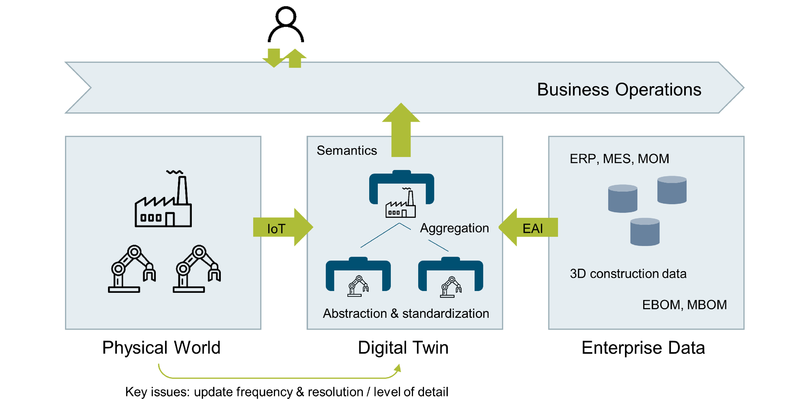

Digital Twins are digital representations of real-world physical entities. They help manage complexity and establish a semantic layer on top of the more technical layers. This, in turn, can make it easier to realize business goals and implement AI/ML solutions using machine data. This section provides an overview, some concrete examples, and a discussion of the situations in which the Digital Twin approach should be considered for an AIoT initiative.

Introduction

There are multiple "flavors" of digital twins. The Platform Industrie 4.0 places the Asset Administration Shell at the core of its Digital Twin strategy [1]. Many PLM companies include the 3D CAD data as a part of the Digital Twin. Some advanced definitions of Digital Twin also include physics simulation. The Digital Twin Consortium defines the digital twin as follows: "A digital twin is a virtual representation of real-world entities and processes, synchronized at a specified frequency and fidelity. Digital twin systems transform business by accelerating holistic understanding, optimal decision-making, and effective action. Digital twins use real-time and historical data to represent the past and present and simulate predicted futures. Digital twins are motivated by outcomes, tailored to use cases, powered by integration, built on data, guided by domain knowledge, and implemented in IT/OT systems" [2].

The Digital Playbook builds on the definition from the Digital Twin Consortium. A key benefit of the Digital Twin concept is managing complexity via abstraction. Especially for complex, heterogeneous portfolios of physical assets, the Digital Twin concept provides a layer of abstraction, e.g., through well-defined Digital Twin interfaces and relationships between different Digital Twin instances. Both the I4.0 AdminShell and the Digital Twins Definition Language (DTDL) [3] provide support in this area.

Depending on the approach chosen, Digital Twin interface definitions often extend the concept of well-established component API models by adding Digital Twin specific concepts such as telemetry events and commands. Relationships between Digital Twin instances can differ. A particularly important one is the aggregation relationship, because this will often be the foundation of managing more complex networks of heterogeneous assets.
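The ideas in this paragraph can be sketched in a few lines of Python. This is only an illustration and is not tied to DTDL or the Asset Administration Shell: the class `DigitalTwin`, its fields, and the example twins (`pump-1`, `valve-7`, `line-A`) are hypothetical names chosen for this sketch. It shows an interface that combines properties, telemetry events, and commands, plus an aggregation relationship between twin instances.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DigitalTwin:
    """Hypothetical twin: properties, telemetry, commands, and child twins."""
    twin_id: str
    properties: Dict[str, float] = field(default_factory=dict)
    telemetry_log: List[dict] = field(default_factory=list)
    commands: Dict[str, Callable[..., str]] = field(default_factory=dict)
    children: List["DigitalTwin"] = field(default_factory=list)  # aggregation

    def on_telemetry(self, event: dict) -> None:
        # Record the event and mirror its values into the current properties.
        self.telemetry_log.append(event)
        self.properties.update(event)

    def invoke(self, command: str, **kwargs) -> str:
        # Dispatch a named command to its registered handler.
        return self.commands[command](**kwargs)

    def all_twins(self) -> List["DigitalTwin"]:
        # Walk the aggregation tree, starting with this twin.
        result = [self]
        for child in self.children:
            result.extend(child.all_twins())
        return result


# A production line aggregates two heterogeneous assets.
pump = DigitalTwin("pump-1", commands={"stop": lambda: "pump-1 stopped"})
valve = DigitalTwin("valve-7")
line = DigitalTwin("line-A", children=[pump, valve])

pump.on_telemetry({"temperature_c": 71.5})
```

Because the line twin aggregates its children, a caller can iterate over `line.all_twins()` without knowing which concrete assets sit underneath.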

The goal of many Digital Twin projects is to create semantic models that allow us to better understand the meaning of information. Ontologies support this goal: they are reusable, industry-specific libraries of Digital Twin models that can be created and exchanged.

Example

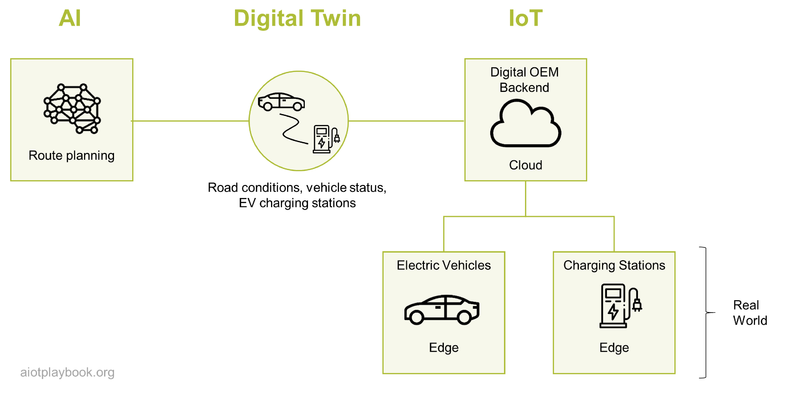

A good example of a Digital Twin is a system that makes route recommendations to drivers of electric vehicles, including stop points at available charging stations. For these recommendations, the system will need a representation of the vehicle itself (including charging status), as well as the charging stations along the chosen route. If this information is logically aggregated as a Digital Twin, the AI in the backend can then use this DT to perform the route calculation, without having to worry about technical integration with the vehicle and the charging stations in the field.

Similarly, the feature responsible for reserving a charging station after a stop has been selected can benefit if the charging station is made available in the form of a Digital Twin, allowing us to make the reservation without having to deal with the underlying complexity of the remote interaction.

The Digital Twin in this case provides a higher level of abstraction than would be made available, for example, via a basic API architecture. This is especially true if the Digital Twin is taking care of data synchronization issues.
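The following Python sketch illustrates this abstraction under strong simplifications. The classes `VehicleTwin` and `ChargingStationTwin`, their fields, and the one-dimensional "route" measured in kilometers are all hypothetical. The point is only that the planning and reservation logic talks to the twins and never to the vehicle or the charging stations directly.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VehicleTwin:
    remaining_range_km: float  # assumed to be derived from the charging status


@dataclass
class ChargingStationTwin:
    station_id: str
    position_km: float   # distance from the start of the route
    available: bool
    reserved: bool = False

    def reserve(self) -> bool:
        # A real twin would forward this request to the remote station.
        if self.available and not self.reserved:
            self.reserved = True
            return True
        return False


def pick_charging_stop(vehicle: VehicleTwin,
                       stations: List[ChargingStationTwin]
                       ) -> Optional[ChargingStationTwin]:
    """Return the farthest free station that is still within range, if any."""
    reachable = [s for s in stations
                 if s.available and not s.reserved
                 and s.position_km <= vehicle.remaining_range_km]
    return max(reachable, key=lambda s: s.position_km, default=None)


vehicle = VehicleTwin(remaining_range_km=180.0)
stations = [
    ChargingStationTwin("A", 90.0, available=True),
    ChargingStationTwin("B", 160.0, available=False),
    ChargingStationTwin("C", 150.0, available=True),
    ChargingStationTwin("D", 210.0, available=True),
]
stop = pick_charging_stop(vehicle, stations)
```

A real route planner would of course work on a road network rather than a single distance value; the sketch only shows where the twin boundary sits.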

Digital Twin and AIoT

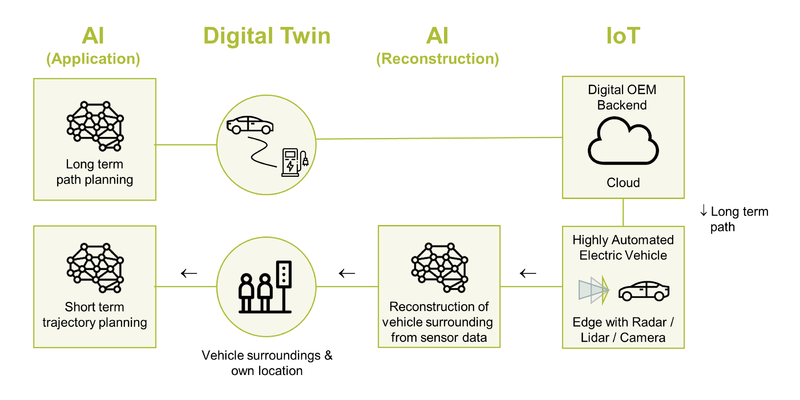

In an AIoT initiative, the Digital Twin concept can play an important role in providing a semantic abstraction layer. The IoT plays the role of providing connectivity services. AI, on the other hand, can play two roles:

- Reconstruction: AI can be an important tool for the reconstruction process, i.e., the process of creating (or "reconstructing") the virtual representation based on the raw data from the sensors.

- Application: Once the Digital Twin is reconstructed, another AI algorithm can be applied to the semantically rich representation of the Digital Twin in order to support the business goals.
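The two roles can be pictured as a two-stage pipeline. In the minimal Python sketch below, both stages are simple placeholder functions standing in for trained models, and the room-climate scenario, the class `RoomTwin`, and the sensor names are hypothetical and not taken from the examples in this section.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class RoomTwin:
    """Semantic view of a room, reconstructed from raw readings."""
    temperature_c: float
    occupied: bool


def reconstruct(raw: Dict[str, List[float]]) -> RoomTwin:
    # Stage 1 (reconstruction): raw sensor samples -> semantic twin state.
    # Averaging and thresholding stand in for a trained model here.
    return RoomTwin(
        temperature_c=mean(raw["thermistor_c"]),
        occupied=max(raw["motion_level"]) > 0.5,
    )


def recommend_heating(twin: RoomTwin, target_c: float = 21.0) -> str:
    # Stage 2 (application): works only on the twin, never on raw data.
    if twin.occupied and twin.temperature_c < target_c:
        return "heat"
    return "idle"


raw_samples = {
    "thermistor_c": [18.9, 19.1, 19.0],
    "motion_level": [0.1, 0.8, 0.2],
}
twin = reconstruct(raw_samples)
action = recommend_heating(twin)
```

The application stage only sees `RoomTwin`, so the reconstruction logic can be replaced (for example, by a learned model) without touching it.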

Example 1: Electric Vehicle

The first example to demonstrate this concept builds on the EV scenario introduced earlier. In addition, the DT concept is now also applied to the Highly Automated Driving Function of the vehicle, which includes short-term trajectory and long-term path planning.

For short-term planning, a digital twin of the vehicle surroundings is created (here, the AI supports the reconstruction of the DT). Next, AI uses the semantically rich interfaces of the digital twin of the vehicle surroundings to perform short-term trajectory planning. This AI will also take the long-term path into consideration, e.g., to determine which way to take at each intersection.

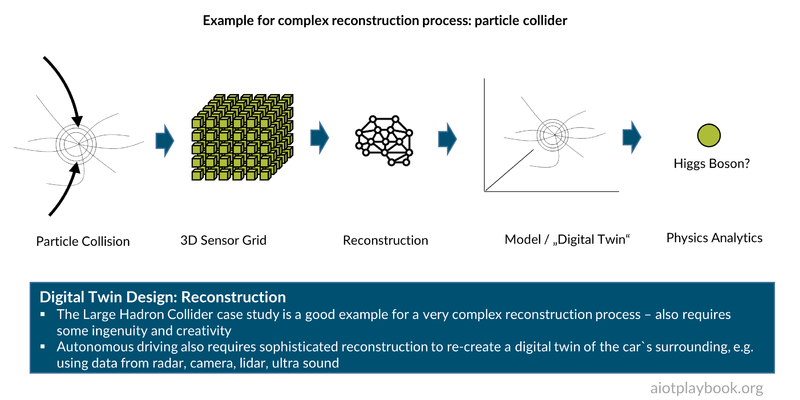

Example 2: Particle Collider

The second example is a particle collider, such as the Large Hadron Collider at CERN. The particle collider uses a 3D grid of ruggedized radioactivity sensors in a cavern of the collider to capture radioactivity after the collision. These data are fed into a very complex tier of compute nodes, which apply advanced analytics to create a digital reconstruction of the particle collision. This Digital Twin is then the foundation for analyzing the physical phenomena that were observed.

DT Resolution and Update Frequency

As mentioned earlier, key questions that must be answered by the solution architect concern the DT resolution and update frequency.

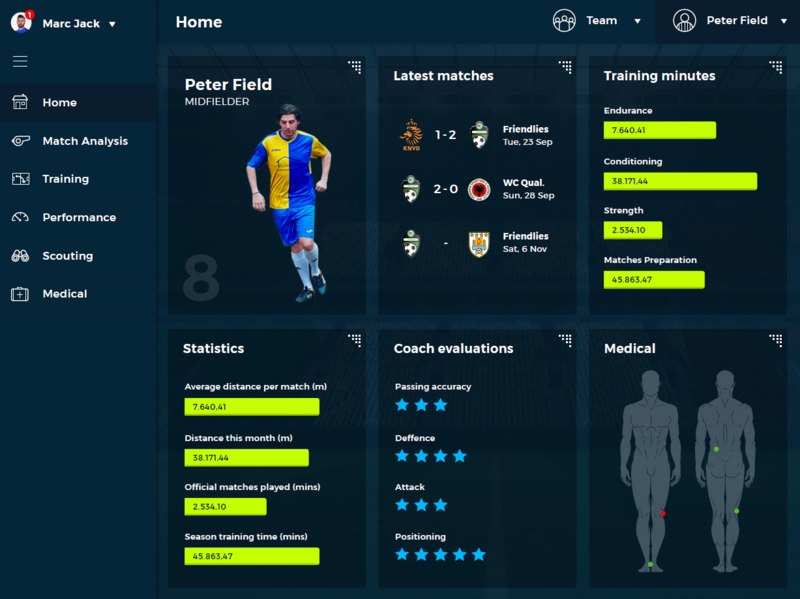

A good example here is a DT for a soccer game. Depending on the role of the different stakeholders, they would have different requirements regarding resolution and update frequency. For example, a betting office might only need the final score of the game. The referee (well, plus everybody else playing or watching) needs more detailed information about whether the ball has actually crossed the line of the goal, in case of a shot on the goal. The audience usually wants an even higher "resolution" for the Internet live feed, including all significant events (goals, fouls, etc.). The team coach might require a detailed heat map of the position of each player during every minute of the game. Finally, the team physician wants additional information about the biorhythm of each player during the entire game.
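As a rough illustration, the Python sketch below derives two stakeholder views of different resolution from the same event log. The `MatchEvent` structure, the event kinds, and the sample data are hypothetical; a real match twin would carry far more detail (positions, timestamps, player identities).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class MatchEvent:
    minute: int
    kind: str   # e.g. "goal", "foul", "pass"
    team: str


def final_score(events: List[MatchEvent], home: str, away: str) -> Tuple[int, int]:
    # Lowest resolution: one pair of numbers for the whole game (betting office).
    goals = [e.team for e in events if e.kind == "goal"]
    return goals.count(home), goals.count(away)


def live_feed(events: List[MatchEvent]) -> List[MatchEvent]:
    # Medium resolution: only significant events (audience live feed).
    return [e for e in events if e.kind in {"goal", "foul"}]


events = [
    MatchEvent(3, "pass", "home"),
    MatchEvent(12, "foul", "away"),
    MatchEvent(27, "goal", "home"),
    MatchEvent(64, "goal", "away"),
    MatchEvent(88, "goal", "home"),
]
score = final_score(events, "home", "away")
feed = live_feed(events)
```

The betting office only needs `score`, which has to be correct once at the end of the game, while the live feed needs `feed` to be updated within seconds of each event.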

Mark Haberland is the CEO of Clariba, a company that offers custom tracking and analytics solutions for soccer teams. He shares the following insights with us: "Football clubs around the world are striving to achieve a competitive advantage and increase performance using the immense data available from the use of digital technology in every aspect of the sport. Continued innovation in the application of sensors, smart video analytics with edge computing, drones, and even robotics, streaming data via mesh Wi-Fi networks and 5G connectivity is providing incredible new capabilities and opportunities for real-time insights. Harnessing this ever-increasing amount of data will allow forward-looking clubs to experiment and to innovate and develop new algorithms to achieve the insights needed to increase player and team performance to win on the pitch. Investing in new technologies, building in-house capabilities and co-innovating with partners such as universities and specialized companies savvy in AIoT will be a differentiating factor for football organisations that want to lead the way."

So there are a number of key questions here: How high should the resolution of the DT be? And how can a combination of sensors and reconstruction algorithms deliver at this resolution?

For individual goal recognition, a dedicated sensor could be embedded in the ball, with a counterpart in the goal posts. This would require a modification of the ball and the goal posts but would allow for very straightforward reconstruction, e.g., via a simple rule.
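Such a rule could look like the Python sketch below. It assumes, hypothetically, that the sensor setup delivers the position of the ball center in a goal-centered coordinate system; the function name and the coordinate convention are illustrative. The goal dimensions are the standard 7.32 m by 2.44 m, the ball radius is an approximation, and the rule ignores the ball radius at the posts and the crossbar.

```python
GOAL_WIDTH_M = 7.32    # distance between the posts
GOAL_HEIGHT_M = 2.44   # ground to lower edge of the crossbar
BALL_RADIUS_M = 0.11   # approximate radius of a standard match ball


def is_goal(x_m: float, y_m: float, z_m: float) -> bool:
    """Rule-based goal check on the ball-center position.

    x_m: distance of the ball center behind the goal line (negative = in play)
    y_m: lateral offset from the center of the goal
    z_m: height of the ball center above the ground
    """
    # The whole ball must have crossed the line, not just its center.
    fully_over_line = x_m > BALL_RADIUS_M
    between_posts = abs(y_m) < GOAL_WIDTH_M / 2
    under_crossbar = z_m < GOAL_HEIGHT_M
    return fully_over_line and between_posts and under_crossbar
```

For example, `is_goal(0.2, 1.0, 0.5)` is true, while `is_goal(0.05, 1.0, 0.5)` is false because part of the ball is still on the line.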

Things become more complicated for the reconstruction process if a video camera is used instead. Here, AI/ML could be utilized, e.g. for goal recognition.

For the biorhythm, chances are that a specialized type of sensor will be attached to the player's body or clothing, e.g., in the shorts or shirt. For the reconstruction process, advanced analytics will probably be required.

Advanced Digital Twins: Physics Simulation and Virtual Sensors

To wrap up the introduction of Digital Twins, we will now examine advanced Digital Twins using physics simulation and virtual sensors. A real-world case study is provided, which is summarized in the following figure. Two experts were interviewed for this: Dr. Przemyslaw Gromala (Team leader of Modelling and Simulation at Bosch Engineering Battery Management Systems and New Products) and Dr. Prith Banerjee (Chief Technology Officer of ANSYS, a provider of multi-physics engineering simulation software for product design, testing and operation).

Dirk Slama: Prith, can you share your definition of Digital Twin with us?

Prith Banerjee: We are looking at Digital Twins during the design, manufacturing and operations phase. Let's take a car motor as an example. You start by creating a CAD model during the design phase. Using the ANSYS products, you can then also create a physics simulation model, which helps you get insights into the future performance of the motor. Then, after the motor has been manufactured, you are entering the operations phase. During operations, sensors are used on the asset to measure key operational indicators, e.g., vibration and temperature of the motor. You can then calibrate the as-designed value of the asset to match the as-manufactured and as-operated values. In some cases, you might not be able to put real sensors in all the places where you need them, either because it is too costly, or technically not feasible. In this case, you can derive a virtual sensor from the physics simulation model. Combining real sensors with virtual sensors can get you a Digital Twin which represents the real world with a very high level of accuracy.

Dirk: Thanks. Przemyslaw, you are working on applying these concepts to high power inverters for electric vehicles. Can you start by explaining what a high-power inverter is, and what use cases you are seeing for advanced Digital Twins?

Przemyslaw Gromala: An inverter for electric vehicles is a power electronics system that converts the direct current from the HV battery into the three-phase alternating current controlling the motor. This of course is a very important component of any EV or hybrid vehicle. One of the major challenges that we are facing right now is that these power modules are completely new devices in automotive electronics, which are combinations of relatively new materials, e.g., epoxy-based molding compounds in combination with silicon carbide technologies, as well as new interconnection technologies based on silver sintering. Of course, we need to understand the complete interactions in a much better way. This is where advanced Digital Twins based on numerical simulations come in handy.

Dirk: What kind of sensors are you using to build the Digital Twin of the power inverter?

Przemyslaw: There are two categories of sensors. The first category includes real sensors, e.g., temperature sensors, vibration or motion sensors. The second category is virtual sensors, which allow us to measure or calculate different stress and strain states in the locations where real sensors cannot be applied.

Dirk: Prith, I understand this concept of virtual sensors is also something that ANSYS is very much focusing on. Can you tell us a little bit about where this fits in, in terms of the development phases?

Prith: At ANSYS we do detailed physics simulation. The different sensor categories that Przemyslaw is talking about go back to fundamental physics, which we simulate with numerical methods such as Finite Element Analysis (FEA). FEA is a widely used method for numerically solving differential equations arising in engineering and mathematical modeling. If you attach different sensors to a physical asset, you get a lot of data, e.g., from measuring vibration or pressure. The problem is that there are locations inside the physical asset where you cannot place a physical sensor. Take our example, the inverter. Putting sensors in all the places where you want them would be technically impossible or prohibitive from a cost perspective. Therefore, in places where we cannot place a real sensor for technical or cost reasons, we can logically assign a virtual sensor. And this is where simulation comes in. We model the actual physics of the system using Finite Element Analysis to predict how a product reacts to real-world forces, vibration, heat, fluid flow, and other physical effects. The physics-based simulation of virtual sensors allows interpolation and extrapolation of the different values. In our example of a power inverter, we will put physical sensors where we can actually put them. And then we will add the virtual sensors and we will essentially say, “Hey, if I were to have a sensor here, this is what the sensor would have produced.” Now, this virtual sensor needs to do the simulation based on some boundary conditions. So what we do is we look at the actual operating data. We look at the current and voltage of the inverter, the actual temperature, and the actual vibration of the car. We will take all those sorts of inputs as boundary conditions for our simulation.

Now, the next question is how detailed the simulation has to be. If we took a full 3D model as the foundation of the physics-based Digital Twins, this could be too much detail to master with realistic effort. This is why we are applying what is called Reduced Order Modeling (ROM). ROM is a very efficient method for reducing the computational complexity of mathematical models in numerical simulations. We can use ROM to design the virtual sensors, and to derive the values we will get from them in different situations. Finally, in the production system, we combine the outputs of the real sensors with the outputs from the virtual sensors by applying Machine Learning algorithms. And this is what gives us a highly accurate Digital Twin using real and virtual sensors together.
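To make the idea of a virtual sensor concrete, here is a deliberately trivial Python sketch. It is not how ANSYS or Bosch implement virtual sensors and it does not use FEA or ROM. It assumes a hypothetical uniform rod with real temperature sensors at both ends, no internal heat source, and steady-state conditions, in which case one-dimensional heat conduction yields a linear temperature profile. The real sensor readings act as boundary conditions, and the function returns what a sensor at an inaccessible interior position would read.

```python
def virtual_temperature(t_left_c: float, t_right_c: float,
                        length_m: float, x_m: float) -> float:
    """Temperature at position x_m inside a uniform rod.

    Steady-state 1D heat conduction without internal heat sources has a
    linear temperature profile between the two boundary temperatures.
    """
    if not 0.0 <= x_m <= length_m:
        raise ValueError("x_m must lie inside the rod")
    return t_left_c + (t_right_c - t_left_c) * x_m / length_m


# Real sensors at both ends provide the boundary conditions;
# the virtual sensor sits in the middle of a 10 cm rod.
t_mid = virtual_temperature(t_left_c=80.0, t_right_c=40.0,
                            length_m=0.10, x_m=0.05)
```

In a real power module, geometry and material behavior are far more complex, which is why the interview refers to FEA and reduced-order models rather than a closed-form expression like this one.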

Dirk: Przemyslaw, how are you applying all this to your power inverter?

Przemyslaw: What is crucial here are the nonlinear simulations, especially the mechanical simulations. This is because the material behaves very differently, e.g., depending on the temperature and time. For the power inverter, we cannot apply physical sensors at all the places where we would like to have them. This is where the combination of nonlinear simulations, virtual sensors, and machine learning is giving us a real edge. This is the foundation for new applications such as the estimation of the state of health of the devices, including prognostics and health management for power electronics. Once we have this established, we can then even think about predictive maintenance for electronics systems.

Prith: Let me add to this. The typical way that people build Digital Twins is by attaching some sort of sensors to an asset, collecting a lot of data, and then building an AI model based on that data. What we have found is that the accuracy of the purely ML-based analytics of a Digital Twin is approximately 80%. If you are doing a physics-based simulation of the Digital Twin, you can increase the accuracy to approximately 90%. Now, by combining the ML-based analytics with the physics-based approach into a hybrid Digital Twin, as Przemyslaw has described it in the work we are doing today with Bosch, you can actually increase the accuracy to up to 99%. If you replace an asset worth a hundred thousand dollars based on a prediction that is only 80% accurate, you are likely to lose $20,000 on average. And that is a big business cost. If you can reduce that error to 1%, this waste of $20,000 is reduced to only $1,000. That is the business value that we are producing with hybrid Digital Twins leveraging AI and physics-based simulation.
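The arithmetic behind these figures can be written down in a few lines of Python. The function name `expected_loss` is hypothetical, and the model is the simplified one implied by the interview: every wrong prediction is assumed to cost the full asset value, so the expected loss is the asset value multiplied by the error rate.

```python
def expected_loss(asset_value: float, accuracy: float) -> float:
    """Expected cost of wrong predictions.

    Simplifying assumption: each wrong prediction costs the full asset value.
    """
    return asset_value * (1.0 - accuracy)


# Accuracy levels quoted in the interview for a $100,000 asset.
for label, accuracy in [("ML only", 0.80),
                        ("physics only", 0.90),
                        ("hybrid", 0.99)]:
    print(f"{label}: ${expected_loss(100_000, accuracy):,.0f}")
```

With 80% accuracy the expected loss is $20,000 and with 99% accuracy it is $1,000, matching the numbers in the interview. The $10,000 figure for the physics-only case is not stated in the interview; it simply follows from applying the same formula to the quoted 90% accuracy.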

Dirk: The Digital Twin really supports the entire product life-cycle?

Przemyslaw: Yes, this is important. Digital Twins start with the design of our power devices. Then we track what happens during production. Finally, we assess the reliability of our devices in the field until the component is actually taken out of the field. And this is the moment when the Digital Twin will reach its end of use. So for me, the Digital Twin spans everything from the beginning of the design process until the end of life of the device.

Dirk: During operations, where does the Digital Twin actually reside in your architecture?

Przemyslaw: That is a very important point. Especially for applications where the Digital Twin is applied to assets in the field, it is important to have them run on-board the asset, e.g., the car. This means that we are not relying on very large clusters for Digital Twin processing but can use the microprocessor or microcontroller that is running in the car. By implementing the Digital Twins in the car, we do not have to transfer all the data to remote cloud services.

Prith: When you build a very high-fidelity version of a Digital Twin, this would usually require a high-performance compute node, e.g., in the cloud. However, what we do is build a simplified model and then export it. And it is this simplified twin that runs in a runtime using Docker containers on the edge with a very small memory footprint, as Przemyslaw said. Running it on the edge makes it possible for the twins to operate at the frequency of the real assets.

Dirk: Prith, in which industries do you see this being applied, predominantly?

Prith: We are currently focused on three broad use cases. One use case we just talked about is for electric vehicles. Another very big use case is for industrial flow networks in oil and gas, where we create a Digital Twin of an oil and gas network with lots of valves and compressors, etc. Another area is manufacturing, e.g., for large injection molding systems and other types of equipment in a factory. So there are lots and lots of applications of Digital Twins across different verticals.

Dirk: Thank you!

References

- [1] Platform Industrie 4.0: Asset Administration Shell, https://www.plattform-i40.de/PI40/Redaktion/EN/Downloads/Publikation/vws-in-detail-presentation.pdf

- [2] Digital Twin Consortium: The Definition of a Digital Twin, https://www.digitaltwinconsortium.org/initiatives/the-definition-of-a-digital-twin.htm

- [3] Digital Twins Definition Language (DTDL), https://docs.microsoft.com/en-us/azure/digital-twins/how-to-manage-model