Verification and Validation

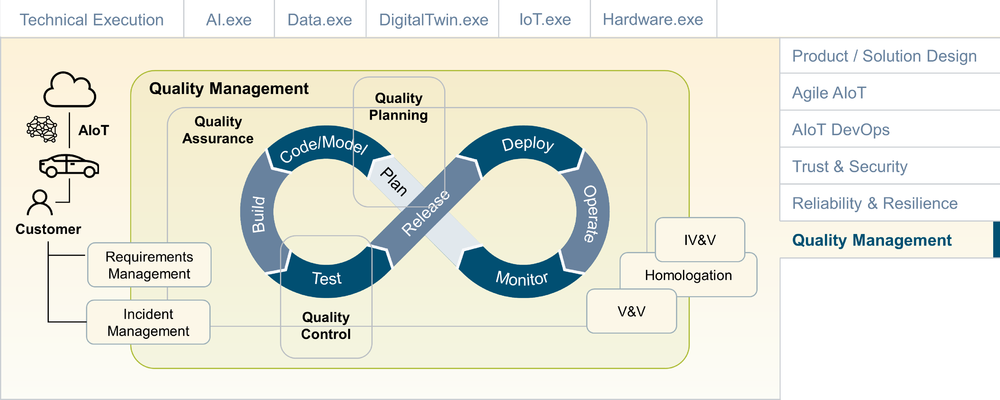

Quality Management (QM) is responsible for overseeing all activities and tasks needed to maintain a desired level of quality. QM in Software Development traditionally has three main components: quality planning, quality assurance, and quality control. In many agile organizations, QM is becoming closely integrated with the DevOps organization. Quality Assurance (QA) is responsible for setting up the organization and its processes to ensure the desired level of quality. In an agile organization, this means that QA needs to be closely aligned with DevOps. Quality Control (QC) is responsible for the output, usually by implementing a test strategy along the various stages of the DevOps cycle. Quality Planning is responsible for setting up the quality and test plans. In a DevOps organization, this will be a continuous process.

QM for AIoT-enabled systems must take into consideration all the specific challenges of AIoT development. These include QM for combined hardware/software development, QM for highly distributed systems (including edge components in the field), and any homologation requirements of the specific industry. Verification & Validation (V&V) usually plays an important role as well. For safety-relevant systems (e.g., in transportation, aviation, energy grids), Independent Verification & Validation (IV&V) by an independent third party may be required.

Verification & Validation

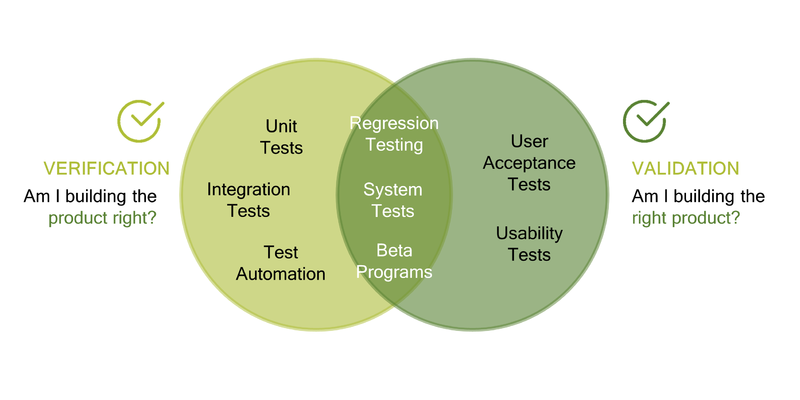

Verification and validation (V&V) are designed to ensure that a system meets its requirements and fulfills its intended purpose. Some widely used Quality Management Systems, such as ISO 9000, build on verification and validation as key quality enablers. Verification is sometimes summarized as the question "Are you building the product right?", since it checks that the requirements have been correctly implemented. Validation can be expressed as "Are you building the right thing?", since it relates to the needs of the user. Common verification methods include unit tests, integration tests and test automation. Validation methods include user acceptance tests and usability tests. Somewhere in between verification and validation are regression tests, system tests and beta test programs. Verification usually links back to requirements. In an agile setup, this can be supported by linking verification tests to the Definition of Done and the Acceptance Criteria of the user stories.
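To illustrate how verification tests can be linked to acceptance criteria, here is a minimal sketch using Python's standard `unittest` module. The user story, the `should_alert` function and its 80 degree default threshold are hypothetical examples, not taken from a real system. Each test method corresponds to one acceptance criterion, so a passing suite can serve as evidence for the Definition of Done.

```python
import unittest


# Hypothetical function under test (illustrative only): raise an alert
# when a temperature reading exceeds a configurable threshold.
def should_alert(temperature_c: float, threshold_c: float = 80.0) -> bool:
    """Return True if the reading is strictly above the alert threshold."""
    return temperature_c > threshold_c


class TestOverheatAlertStory(unittest.TestCase):
    """Verification tests for a hypothetical user story:
    'As a fleet operator, I want to be alerted when an engine overheats.'
    Each test maps to one acceptance criterion (AC) of the story."""

    def test_ac1_alert_above_threshold(self):
        # AC1: a reading above the threshold triggers an alert
        self.assertTrue(should_alert(85.0))

    def test_ac2_no_alert_at_or_below_threshold(self):
        # AC2: readings at or below the threshold do not trigger an alert
        self.assertFalse(should_alert(80.0))
        self.assertFalse(should_alert(20.0))

    def test_ac3_threshold_is_configurable(self):
        # AC3: the threshold can be configured, e.g., per asset type
        self.assertTrue(should_alert(70.0, threshold_c=65.0))
```

Saved as a module, the suite can be run with `python -m unittest <module>`; in a CI pipeline, a failing test then blocks the story from being considered done.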

Quality Assurance and AIoT DevOps

So how does Quality Assurance fit with our holistic AIoT DevOps approach? First, we need to understand the quality-related challenges, both functional and non-functional. Functional challenges can be derived from the agile story map and sprint backlogs. Non-functional challenges in an AIoT system will be related to AI, cloud and enterprise systems, networks, and IoT/edge devices. In addition, previously executed tests, as well as input from ongoing system operations, must be taken into consideration. All of this must serve as input to the Quality Planning. During this planning phase, concrete actions for QA-related activities in development, integration, testing and operations will be defined.

QA tasks during development must be supported both by the development team and by any dedicated QA engineers. The developers usually perform tasks such as manual testing, code reviews, and the development of automated unit tests. The QA engineers work on test suite engineering and the test automation setup.

During the CI phase (Continuous Integration), basic integration tests, automated unit tests (before the check-in of the new code), and automatic code quality checks can be performed.

During the CT phase (Continuous Testing), many automated tests can be performed, including API testing, integration testing, system testing, automated UI tests, and automated functional tests.

Finally, during Continuous Delivery (CD) and operations, User Acceptance Tests (UATs) and lab tests can be performed. For an AIoT system, digital features of the physical assets can be tested with test fleets in the field. Note that some advanced organizations are even embedding test suites in their production systems. Netflix, for example, became famous for pioneering this approach: by letting loose an "army" of so-called Chaos Monkeys, which randomly disable parts of the production environment, it forced its engineers to build systems that withstand turbulent and unexpected real-world conditions. This practice is now referred to as "Chaos Engineering".
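The principle behind chaos engineering can be illustrated with a toy simulation: inject a failure, then check whether a "steady-state hypothesis" (here: requests are still answered) still holds. This is a minimal, self-contained sketch; the classes `ServiceInstance` and `LoadBalancer` and the function `chaos_monkey` are hypothetical in-memory stand-ins, not Netflix's Chaos Monkey or any real chaos-engineering tool, which operate on real infrastructure.

```python
import random
from typing import List, Optional


class ServiceInstance:
    """Hypothetical in-memory stand-in for one replica of a backend service."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.alive = True

    def handle(self, request: str) -> str:
        if not self.alive:
            raise ConnectionError(f"{self.name} is down")
        return f"{self.name} handled {request}"


class LoadBalancer:
    """Routes each request to the first healthy replica (simple failover)."""

    def __init__(self, instances: List[ServiceInstance]) -> None:
        self.instances = instances

    def route(self, request: str) -> str:
        for instance in self.instances:
            try:
                return instance.handle(request)
            except ConnectionError:
                continue  # replica is down, try the next one
        raise RuntimeError("no healthy replicas left")


def chaos_monkey(instances: List[ServiceInstance], rng: random.Random) -> Optional[ServiceInstance]:
    """Inject a failure: terminate one randomly chosen live replica."""
    live = [i for i in instances if i.alive]
    if not live:
        return None
    victim = rng.choice(live)
    victim.alive = False
    return victim


def run_chaos_experiment(replicas: int = 3, kills: int = 2, seed: int = 42) -> bool:
    """Return True if requests keep succeeding after each injected failure."""
    rng = random.Random(seed)
    instances = [ServiceInstance(f"replica-{n}") for n in range(replicas)]
    balancer = LoadBalancer(instances)
    for round_no in range(kills):
        chaos_monkey(instances, rng)
        try:
            balancer.route(f"request-{round_no}")
        except RuntimeError:
            return False  # steady-state hypothesis violated: outage
    return True


print(run_chaos_experiment(replicas=3, kills=2))  # True
print(run_chaos_experiment(replicas=3, kills=3))  # False
```

With three replicas, the failover survives two injected failures; a third failure violates the hypothesis, and the experiment reports it instead of the outage being discovered by users.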

Quality Assurance for AIoT

What are some of the AIoT-specific challenges for QA? The following sections look at QA for AI, as well as at the integration perspective. AI poses its own set of challenges for QA. The integration perspective is important because an AIoT system, by its very nature, is highly distributed and consists of multiple components.

QA & AI

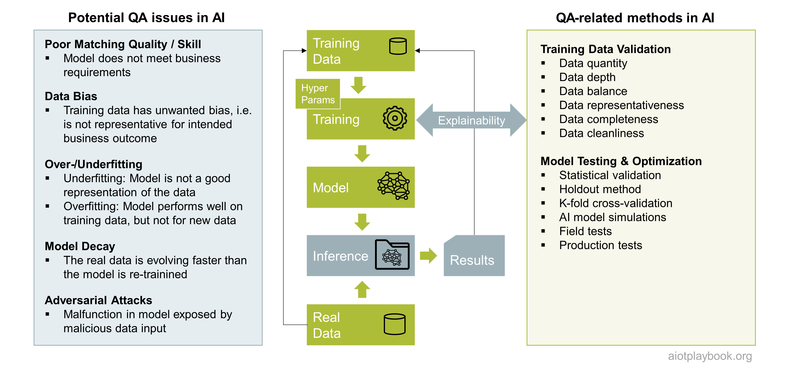

QA for AI has some aspects that are very different from traditional software QA. The use of training data, labels for supervised learning, and ML algorithms instead of code with the usual IF/THEN/ELSE logic poses many challenges from a QA perspective. The fact that many ML models are not easily "explainable" adds to this.

From the perspective of the final system, QA of the AI-related services usually focuses on functional testing, considering AI-based services a black box ("Black Box Testing") which is tested in the context of the other services that make up the complete AIoT system. However, it will usually be very difficult to ensure a high level of quality if this is the only test approach. Consequently, QA for AI services in an AIoT system also requires a "white box" approach that specifically focuses on AI-based functionality.

In his article "Data Readiness: Using the 'Right' Data" [1], Alex Castrounis describes the following considerations for the data used for AI models:

- Data quantity: does the dataset have sufficient quantity of data?

- Data depth: is there enough varied data to fill out the feature space (i.e., the number of possible value combinations across all features in a dataset)?

- Data balance: does the dataset contain target values in equal proportions?

- Data representativeness: does the data reflect the range and variety of feature values that a model will likely encounter in the real world?

- Data completeness: does the dataset contain all data that have a significant relationship with and influence on the target variable?

- Data cleanliness: has the data been cleaned of errors, e.g., inaccurate headers or labels, or values that are incomplete, corrupted, or incorrectly formatted?

In practice, it is important to ensure that cleaning efforts on the training and test data do not lead to situations where the model cannot deal with errors or inconsistencies when processing unseen data during inference.
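Some of these readiness criteria can be approximated with simple automated checks. The sketch below, in plain Python without external libraries, computes indicators for data quantity, data balance and one aspect of data cleanliness (missing values). The function name `data_readiness_report`, the list-of-dicts data format and the threshold values are assumptions for illustration only. Data depth, representativeness and completeness usually require domain knowledge and are not covered.

```python
from collections import Counter
from typing import Any, Dict, List


def data_readiness_report(
    rows: List[Dict[str, Any]],
    target: str,
    min_rows: int = 1000,
    max_imbalance_ratio: float = 3.0,
) -> Dict[str, Any]:
    """Compute simple readiness indicators for a list-of-dicts dataset.

    The thresholds are illustrative assumptions, not recommended values.
    """
    report: Dict[str, Any] = {}

    # Data quantity: does the dataset reach a minimum number of samples?
    report["row_count"] = len(rows)
    report["quantity_ok"] = len(rows) >= min_rows

    # Data balance: ratio between the most and least frequent target value.
    counts = Counter(row[target] for row in rows if row.get(target) is not None)
    report["class_counts"] = dict(counts)
    if len(counts) < 2:
        ratio = float("inf")  # fewer than two classes: cannot be balanced
    else:
        ratio = max(counts.values()) / min(counts.values())
    report["imbalance_ratio"] = ratio
    report["balance_ok"] = ratio <= max_imbalance_ratio

    # Data cleanliness: share of missing (None) values per feature.
    # Issues are only reported, not removed, so that cleaning decisions
    # stay explicit (cf. the caveat about over-cleaning).
    features = sorted({key for row in rows for key in row})
    report["missing_share"] = {
        f: sum(1 for row in rows if row.get(f) is None) / len(rows) for f in features
    }
    return report


sample = [
    {"vibration": 0.2, "temp_c": 61.0, "label": "ok"},
    {"vibration": 0.9, "temp_c": None, "label": "fault"},
    {"vibration": 0.3, "temp_c": 58.5, "label": "ok"},
    {"vibration": 0.4, "temp_c": 60.1, "label": "ok"},
]
report = data_readiness_report(sample, target="label", min_rows=3)
print(report["imbalance_ratio"])          # 3.0
print(report["missing_share"]["temp_c"])  # 0.25
```

Reporting rather than silently fixing issues keeps the data owner in control of how much cleaning is applied.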

In addition to the data, the model itself must also undergo a QA process. Some of the common techniques used for model validation and testing include the following:

- Statistical validation examines the qualitative and quantitative foundation of the model, e.g., validating the model's mathematical assumptions

- The holdout method is a basic type of cross-validation. The dataset is split into two sets, the training set and the test set. The model is trained on the training set. The test set is used as "unseen data" to evaluate the skill of the model. A common split is 80% training data and 20% test data.

- Cross-validation is a more advanced method used to estimate the skill of an ML model. The dataset is randomly split into k "folds" (hence "k-fold cross-validation"). One fold is used as the test set and the remaining k-1 folds for training. The process is repeated until each fold has been used once as the test set. The results are then summarized as the mean of the model skill scores.

- Model simulation embeds the final model into a simulation environment for testing in near-real-world conditions (as opposed to training the model using the simulation).

- Field tests and production tests allow the model to be tested under real-world conditions. However, for models used in environments relevant to functional safety, a safe and controlled degradation of the service must be ensured in case the model performs badly.
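The holdout method and k-fold cross-validation described above can be sketched in plain Python. To keep the example self-contained, it uses a deliberately simple, hypothetical model (a one-feature nearest-centroid classifier) and accuracy as the skill score; the helper names `train_centroids`, `accuracy`, `holdout` and `k_fold` are illustrative only. In practice, an ML library such as scikit-learn would typically provide these splitting utilities.

```python
import random
from statistics import mean
from typing import Dict, List, Sequence, Tuple

Sample = Tuple[float, int]  # (feature value, class label)


def train_centroids(train: Sequence[Sample]) -> Dict[int, float]:
    """Toy model: learn the mean feature value of each class."""
    by_class: Dict[int, List[float]] = {}
    for x, y in train:
        by_class.setdefault(y, []).append(x)
    return {y: mean(xs) for y, xs in by_class.items()}


def accuracy(model: Dict[int, float], test: Sequence[Sample]) -> float:
    """Skill score: share of samples whose nearest centroid has the right label."""
    correct = 0
    for x, y in test:
        predicted = min(model, key=lambda label: abs(x - model[label]))
        correct += predicted == y
    return correct / len(test)


def holdout(data: Sequence[Sample], test_share: float = 0.2, seed: int = 0) -> float:
    """Holdout: shuffle once, train on 80%, evaluate on the unseen 20%."""
    shuffled = list(data)
    random.Random(seed).shuffle(shuffled)
    split = int(len(shuffled) * (1 - test_share))
    if not 0 < split < len(shuffled):
        raise ValueError("dataset too small for this split")
    model = train_centroids(shuffled[:split])
    return accuracy(model, shuffled[split:])


def k_fold(data: Sequence[Sample], k: int = 5, seed: int = 0) -> float:
    """k-fold cross-validation: each fold is the test set exactly once."""
    if not 2 <= k <= len(data):
        raise ValueError("k must be between 2 and the number of samples")
    shuffled = list(data)
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]  # k disjoint, near-equal folds
    scores = []
    for i, test_fold in enumerate(folds):
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        scores.append(accuracy(train_centroids(train), test_fold))
    return mean(scores)  # summarize with the mean skill score


rng = random.Random(42)
data = [(rng.gauss(0.0, 1.0), 0) for _ in range(100)] + [
    (rng.gauss(5.0, 1.0), 1) for _ in range(100)
]
print(f"holdout accuracy:     {holdout(data):.2f}")
print(f"5-fold mean accuracy: {k_fold(data, k=5):.2f}")
```

For such well-separated synthetic classes, both estimates should be close to 1.0. Because k-fold cross-validation uses every sample for testing exactly once, it usually gives a more stable estimate than a single holdout split, at the cost of training k models.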

Integrated QA for AIoT

At the service level, AI services can usually be tested using the methods outlined in the previous section. After the initial tests are performed by the AI service team, it is important that AI services be integrated into the overall AIoT product for real-world integration tests. This means that AI services are integrated with the remaining IoT services to build the full AIoT system. This is shown in the following figure. The fully integrated system can then be used for User Acceptance Tests, load and scalability tests, and so on.

Homologation

Usually, the homologation process requires the submission of an official report to the approval authority. In some cases, a third-party assessment must be included as well (see Independent Verification & Validation above). Depending on the product, industry and region, the approval authorities will differ. The result is usually an approval certificate that can either relate to a product ("type") or the organization that is responsible for creating and operating the product.

Since AIoT combines many new and sometimes emerging technologies, the homologation process might not always be completely clear. For example, there are still many open questions regarding the use of over-the-air (OTA) updates and AI in the automotive approval processes of most countries.

Nevertheless, it is important for product managers to have a clear picture of the requirements and processes in this area, and that the foundation for efficient homologation in the required areas is ensured early on. Doing so will avoid delays in approvals that can have an impact on the launch of the new product.

References

- [1] Alex Castrounis, "Data Readiness: Using the 'Right' Data", 2010
